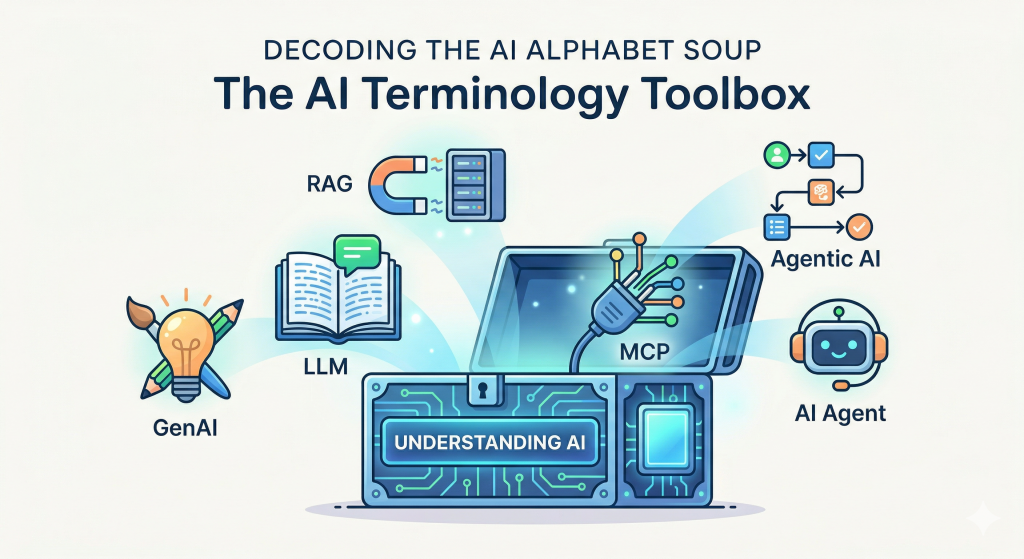

Decoding the AI Alphabet Soup: A Beginner’s Guide to Modern AI Terminologies

If you’ve spent any time on the internet or in a dev standup recently, you’ve probably been hit with a barrage of acronyms and buzzwords: GenAI, LLMs, RAG, MCP, Agents. It sounds like a secret language, and honestly, it can be intimidating.

When I recently spoke with some junior developers, I realized how confusing this terminology can be. You might understand the code, but the high-level concepts can still feel blurry.

If you are trying to navigate the current tech landscape, take courses, or just figure out which tools you should be building with, you need to know what these words actually mean. Let’s break them down without the heavy jargon.

1. AI (Artificial Intelligence): The Big Umbrella

Think of AI as the parent category for everything else on this list. At its core, Artificial Intelligence is simply a machine or software doing things that typically require human brainpower—like recognizing patterns, translating languages, or making decisions based on data.

The Analogy: AI is like the concept of “Vehicles.” It’s a broad term that includes everything from bicycles to spaceships.

2. GenAI (Generative AI): The Creators

Much of traditional AI is about analyzing existing data (e.g., “Is this email spam?” or “Which movie should I recommend next?”). Generative AI is a sub-field of AI that creates brand-new content instead: text, images, computer code, or even music, generated in response to a prompt.

The Analogy: If traditional AI is a sharp-eyed editor analyzing a book, GenAI is the author writing a completely new story from scratch.

3. LLM (Large Language Model): The Brain Behind the Text

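When you chat with a bot and it responds with remarkably human-like text, you are most likely talking to an LLM. These are massive AI models trained on enormous amounts of text (web pages, books, code, and more). They don’t actually “think” the way we do; instead, they are extremely good at predicting the most likely next word in a sentence (technically the next “token,” which can be a whole word or a piece of one), one word after another, based on the patterns in everything they were trained on.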

The Analogy: Imagine a super-reader who has read an enormous library of books, articles, and forum posts, and absorbed its patterns so well that it can instantly summarize a topic or mimic almost any writing style.
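To make “predicting the next word” a little more concrete, here is a deliberately tiny sketch in Python. It is not how a real LLM works internally (real models are huge neural networks that operate on tokens and consider far more context); it simply counts which word tends to follow which in a toy training text and then predicts the most frequent follower. The function names (`train_bigrams`, `predict_next`, `generate`) are made up for this illustration.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """'Training': count which word follows each word in the text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the word seen most often after `word`, or None if `word` was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Keep appending the predicted next word, feeding each prediction back in."""
    out = [start.lower()]
    for _ in range(max_words):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # cat
print(generate(model, "the", 4))   # the cat sat on the
```

Feeding each prediction back in as the next input is, in spirit, how a chatbot writes a reply word by word; the big difference is how much smarter the real prediction step is.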

4. RAG (Retrieval-Augmented Generation): The Open-Book Test

One big problem with LLMs is that they sometimes confidently make things up (we call this “hallucinating”), especially when asked about recent events or private information they never saw during training. RAG reduces this problem. It’s a technique where the system first searches a specific, trusted source of yours (such as company documents or a database) for relevant information, and then the LLM generates its answer based on those retrieved facts.

The Analogy: Imagine taking an exam. An LLM alone is relying strictly on its memory. RAG is an open-book test—the AI is allowed to look up the exact company handbook or database before writing down its answer.
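Here is a minimal sketch of the retrieve-then-generate idea, assuming a tiny in-memory list stands in for your trusted database. Real RAG systems usually rank documents with embeddings and a vector database; this version swaps in simple word-overlap scoring to stay self-contained, and it stops just before the actual LLM call. The documents and function names are hypothetical.

```python
from __future__ import annotations

import re

# Hypothetical snippets standing in for your company handbook or database.
DOCUMENTS = [
    "Returns are accepted within 30 days of purchase with a receipt.",
    "Our support team is available Monday to Friday, 9am to 5pm.",
    "Refunds are issued to the original payment method within 5 business days.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of words, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list[str], k: int = 2) -> list[str]:
    """Retrieval: rank documents by how many words they share with the question."""
    q_words = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q_words & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, facts: list[str]) -> str:
    """Augmentation: put the retrieved facts into the prompt the LLM will see."""
    fact_lines = "\n".join(f"- {f}" for f in facts)
    return (
        "Answer using only the facts below. If they don't cover it, say you don't know.\n"
        f"Facts:\n{fact_lines}\n\n"
        f"Question: {question}"
    )


question = "How many days do I have for returns?"
prompt = build_prompt(question, retrieve(question, DOCUMENTS, k=1))
print(prompt)
# Generation: `prompt` would now be sent to an LLM of your choice (call omitted here).
```

The instruction to answer only from the supplied facts is what turns this into an open-book test: the model is pointed at your handbook instead of relying on memory.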

5. AI Agent: The Doers

Most GenAI just talks to you. You ask a question, it gives an answer, and the interaction ends. An AI Agent goes a step further: it can actually do things. You give it a goal, and it figures out the steps, uses tools (like a web browser, a calculator, or your calendar API), and executes the task.

The Analogy: An LLM is like a smart consultant who gives you advice on how to book a flight. An AI Agent is your personal assistant who actually takes your credit card, browses the web, and books the flight for you.
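The heart of most agents is a loop: decide on the next step, call a tool, look at the result, and repeat until the goal is done. The sketch below assumes two made-up tools (a tiny calculator and an in-memory calendar) and replaces the LLM “brain” with a hard-coded `decide_next_step` function, so only the loop structure should be taken literally.

```python
from __future__ import annotations

from typing import Callable, Optional


# --- Hypothetical tools the agent is allowed to use ---
def calculator(expression: str) -> str:
    """A deliberately tiny calculator that only understands 'a + b' and 'a * b'."""
    a, op, b = expression.split()
    if op == "+":
        return str(int(a) + int(b))
    if op == "*":
        return str(int(a) * int(b))
    raise ValueError(f"unsupported operator: {op}")


CALENDAR: list[str] = []  # pretend calendar storage


def add_to_calendar(event: str) -> str:
    CALENDAR.append(event)
    return f"added '{event}'"


TOOLS: dict[str, Callable[[str], str]] = {
    "calculator": calculator,
    "calendar": add_to_calendar,
}


def decide_next_step(goal: str, observations: list[str]) -> Optional[tuple[str, str]]:
    """Stand-in for the LLM: pick the next (tool, input), or None when done.
    A real agent would ask a model, passing it the goal and observations so far;
    here the two steps are hard-coded for the example goal."""
    if not observations:
        return ("calculator", "3 * 120")
    if len(observations) == 1:
        return ("calendar", f"Team dinner for 3, budget ${observations[0]}")
    return None


def run_agent(goal: str, max_steps: int = 5) -> list[str]:
    """The agent loop: decide, act with a tool, record what happened, repeat."""
    observations: list[str] = []
    for _ in range(max_steps):  # a step limit keeps a confused agent from looping forever
        step = decide_next_step(goal, observations)
        if step is None:
            break
        tool_name, tool_input = step
        observations.append(TOOLS[tool_name](tool_input))
    return observations


observations = run_agent("Book a team dinner for 3 people at $120 each")
print(observations)
print(CALENDAR)
```

Swapping the hard-coded `decide_next_step` for a call to a real model is what turns this skeleton into an actual agent; the loop, the tool table, and the step limit stay the same.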

6. Agentic AI: The Autonomous Team

“Agentic AI” refers to systems with a high degree of autonomy. Instead of one agent doing one specific task, agentic workflows involve systems (sometimes multiple agents talking to each other) that can plan, check and correct their own work, and manage complex, multi-step projects over time with very little human intervention.

The Analogy: If an AI Agent is an intern, Agentic AI is an entire department of autonomous workers managing a project from start to finish, only pinging you when they need final approval.
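One pattern behind that autonomy is self-correction: one agent does the work, another checks it, and rejected work is retried before anything reaches a human. This is a toy sketch of that pattern, not any particular framework. The planner, worker, and reviewer are hypothetical stand-ins for what would normally be separate LLM-backed agents, and the worker gets one task wrong on purpose so you can see the retry.

```python
from __future__ import annotations


def planner(project: str) -> list[str]:
    """Hypothetical planning agent: break the project into tasks (hard-coded here)."""
    return ["draft refund email", "calculate refund amount"]


def worker(task: str, attempt: int) -> str:
    """Hypothetical worker agent. It gets the refund amount wrong on its first
    attempt on purpose, so the review step has something to catch."""
    if task == "calculate refund amount" and attempt == 1:
        return "refund: $-40"
    return f"done: {task}"


def reviewer(output: str) -> bool:
    """Hypothetical reviewer agent: accept only results that look valid."""
    return output.startswith("done:")


def run_project(project: str, max_attempts: int = 3) -> tuple[list[str], list[str]]:
    """Plan, then for each task: work, review, and retry until accepted or out of attempts."""
    results: list[str] = []
    log: list[str] = []
    for task in planner(project):
        for attempt in range(1, max_attempts + 1):
            output = worker(task, attempt)
            if reviewer(output):
                results.append(output)
                break
            log.append(f"rejected {output!r} for '{task}', retrying")
        else:
            # Every attempt failed: this is where the system would ping a human.
            log.append(f"escalating '{task}' to a human")
    return results, log


results, log = run_project("process a customer refund")
print(log)
print("Ready for final approval:", results)
```

The human only appears at the very end (final approval) or when the retries run out, which mirrors the “only pinging you when they need final approval” idea.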

7. MCP (Model Context Protocol): The Universal Plug

This is a newer, slightly more technical term. As developers build AI Agents, they need these AIs to connect securely to local files, databases, and business tools (like Slack or GitHub). MCP, an open standard introduced by Anthropic in late 2024, makes this much easier. Instead of writing custom integration code for every pairing of AI application and tool, each tool is exposed once through an MCP “server,” and any MCP-compatible AI application can connect to it in the same standard way.

The Analogy: MCP is like the USB-C cable of the AI world. It’s a universal plug that allows the AI “brain” to securely connect to your local data and tools without needing a custom adapter every time.
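Under the hood, MCP messages are JSON-RPC 2.0 requests and responses; for example, a client can ask a server which tools it offers (`tools/list`) and then invoke one (`tools/call`). The sketch below hand-rolls a toy handler for just those two methods so you can see the message shape. It skips parts of the real protocol such as the initialization handshake and the transport layer (in practice you would use an official MCP SDK), and the `lookup_order` tool and its data are hypothetical.

```python
from __future__ import annotations

import json

# Hypothetical business data and the one tool this toy server exposes.
ORDERS = {"A123": "shipped", "B456": "processing"}

TOOLS = [{
    "name": "lookup_order",
    "description": "Look up the status of an order by its ID.",
    "inputSchema": {  # a JSON Schema describing the tool's arguments
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}]


def reply(req_id, *, result=None, error=None) -> str:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    msg = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)


def handle(raw_request: str) -> str:
    """Answer one JSON-RPC request for the MCP methods tools/list and tools/call."""
    req = json.loads(raw_request)
    req_id, method = req.get("id"), req.get("method")

    if method == "tools/list":
        return reply(req_id, result={"tools": TOOLS})

    if method == "tools/call":
        params = req.get("params", {})
        if params.get("name") != "lookup_order":
            return reply(req_id, error={"code": -32602, "message": "Unknown tool"})
        order_id = params.get("arguments", {}).get("order_id", "")
        status = ORDERS.get(order_id, "not found")
        # Tool results come back as a list of content items, here a single text item.
        return reply(req_id, result={
            "content": [{"type": "text", "text": f"Order {order_id}: {status}"}],
            "isError": False,
        })

    return reply(req_id, error={"code": -32601, "message": "Method not found"})


# What an AI application (the MCP client) might send to call the tool:
request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "lookup_order", "arguments": {"order_id": "A123"}},
})
response = json.loads(handle(request))
print(response["result"]["content"][0]["text"])  # Order A123: shipped
```

Because every tool speaks this same request/response shape, an AI application that understands MCP can discover and call tools it has never seen before, which is exactly the “no custom adapter” promise of the USB-C analogy.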

Tying It All Together

Imagine you want to build a customer service system: You use GenAI (specifically an LLM) to talk to the customer. You use RAG to let the AI read your specific return policies. You turn it into an AI Agent so it can actively process the refund in your system, perhaps connecting to your internal software using MCP. When your entire business runs on these autonomous systems working together, you are operating with Agentic AI.

Welcome to the new era of tech—it’s a lot less intimidating once you know the vocabulary!

Happy Coding 🙂